Method

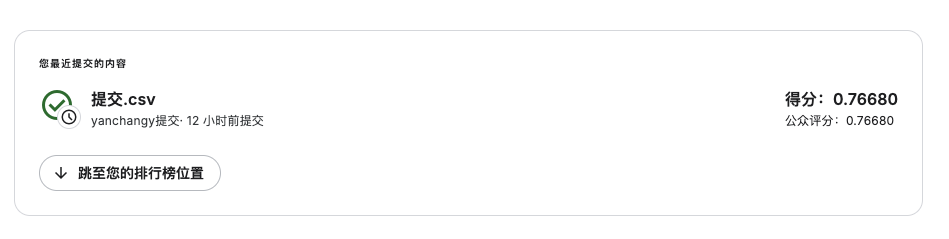

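I fine-tuned a pretrained ResNet-18: the final fully connected layer is replaced, and the last two residual stages (`layer3` and `layer4`) are unfrozen. For reasons I have not worked out, the model still overfits: after a few dozen epochs of training, validation accuracy gets stuck at around 0.76. I ran out of ideas and went to bed.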
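To put a number on "stuck at around 0.76", one option is to track the best validation accuracy seen so far and count how many epochs have passed since it last improved. The sketch below is only an illustration: `epochs_since_improvement`, `min_delta`, and the accuracy values are hypothetical and not taken from the actual run.

```python
def epochs_since_improvement(valid_accs, min_delta=1e-3):
    """Count trailing epochs that failed to beat the best accuracy by min_delta."""
    best = float("-inf")
    stale = 0
    for acc in valid_accs:
        if acc > best + min_delta:
            best = acc   # new best: reset the counter
            stale = 0
        else:
            stale += 1   # no meaningful improvement this epoch
    return stale

# Hypothetical per-epoch validation accuracies
history = [0.60, 0.70, 0.74, 0.76, 0.759, 0.760, 0.758]
print(epochs_since_improvement(history))
```

A large count suggests that more epochs under the same settings are unlikely to help.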

Code

import collections
import math
import os
import shutil

import pandas as pd
import torch
import torchvision
from torch import nn
from d2l import torch as d2l

#@save
d2l.DATA_HUB['cifar10_tiny'] = (d2l.DATA_URL + 'kaggle_cifar10_tiny.zip',
                                '2068874e4b9a9f0fb07ebe0ad2b29754449ccacd')

data_dir = '../data/cifar-10/'

#@save
def read_csv_labels(fname):
    """Read fname and return a dict mapping file name to label."""
    with open(fname, 'r') as f:
        # Skip the header line (column names)
        lines = f.readlines()[1:]
    tokens = [l.rstrip().split(',') for l in lines]
    return dict(((name, label) for name, label in tokens))

labels = read_csv_labels(os.path.join(data_dir, 'trainLabels.csv'))
print('# training examples:', len(labels))
print('# classes:', len(set(labels.values())))

#@save
def copyfile(filename, target_dir):
    """Copy a file into the target directory."""
    os.makedirs(target_dir, exist_ok=True)
    shutil.copy(filename, target_dir)

#@save
def reorg_train_valid(data_dir, labels, valid_ratio):
    """Split the validation set out of the original training set."""
    # Number of examples in the class with the fewest examples
    n = collections.Counter(labels.values()).most_common()[-1][1]
    # Number of validation examples per class
    n_valid_per_label = max(1, math.floor(n * valid_ratio))
    label_count = {}
    for train_file in os.listdir(os.path.join(data_dir, 'train')):
        label = labels[train_file.split('.')[0]]
        fname = os.path.join(data_dir, 'train', train_file)
        # Every image goes into train_valid
        copyfile(fname, os.path.join(data_dir, 'train_valid_test',
                                     'train_valid', label))
        # The first n_valid_per_label images of each class go to valid,
        # the rest go to train
        if label not in label_count or label_count[label] < n_valid_per_label:
            copyfile(fname, os.path.join(data_dir, 'train_valid_test',
                                         'valid', label))
            label_count[label] = label_count.get(label, 0) + 1
        else:
            copyfile(fname, os.path.join(data_dir, 'train_valid_test',
                                         'train', label))
    return n_valid_per_label
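The value of `n_valid_per_label` depends only on the smallest class. As a rough check that does not touch the file system, the sketch below repeats that arithmetic on a made-up label dictionary; `valid_count_per_label` and `toy_labels` are hypothetical names used only for this illustration.

```python
import collections
import math

def valid_count_per_label(labels, valid_ratio):
    """Number of validation examples taken from each class."""
    smallest = min(collections.Counter(labels.values()).values())
    return max(1, math.floor(smallest * valid_ratio))

# Toy data: 30 'cat' images and 20 'dog' images
toy_labels = {str(i): 'cat' for i in range(30)}
toy_labels.update({str(i): 'dog' for i in range(30, 50)})
print(valid_count_per_label(toy_labels, 0.1))  # smallest class has 20 images
```

Because the count comes from the smallest class, every class contributes the same number of validation images even when the classes are imbalanced.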

def train_batch_ch13(net, X, y, loss, trainer, devices):
    """Train on one minibatch with multiple GPUs."""
    if isinstance(X, list):
        # Needed for BERT fine-tuning
        X = [x.to(devices[0]) for x in X]
    else:
        X = X.to(devices[0])
    y = y.to(devices[0])
    net.train()
    trainer.zero_grad()
    pred = net(X)
    l = loss(pred, y)
    l.sum().backward()
    trainer.step()
    train_loss_sum = l.sum()
    train_acc_sum = d2l.accuracy(pred, y)
    return train_loss_sum, train_acc_sum

#@save
def reorg_test(data_dir):
    """Organize the test set so it is easy to read at prediction time."""
    for test_file in os.listdir(os.path.join(data_dir, 'test')):
        copyfile(os.path.join(data_dir, 'test', test_file),
                 os.path.join(data_dir, 'train_valid_test', 'test',
                              'unknown'))

def reorg_cifar10_data(data_dir, valid_ratio):
    labels = read_csv_labels(os.path.join(data_dir, 'trainLabels.csv'))
    reorg_train_valid(data_dir, labels, valid_ratio)
    reorg_test(data_dir)

batch_size = 512
valid_ratio = 0.1
reorg_cifar10_data(data_dir, valid_ratio)

transform_train = torchvision.transforms.Compose([
    # Upscale the image to a 40-pixel square
    torchvision.transforms.Resize(40),
    # Randomly crop a square covering 0.64 to 1 times the area of the
    # 40x40 image, then rescale it to a 32x32 square
    torchvision.transforms.RandomResizedCrop(32, scale=(0.64, 1.0),
                                             ratio=(1.0, 1.0)),
    torchvision.transforms.RandomHorizontalFlip(),
    torchvision.transforms.ToTensor(),
    # Normalize each channel of the image
    torchvision.transforms.Normalize([0.4914, 0.4822, 0.4465],
                                     [0.2023, 0.1994, 0.2010])])

transform_test = torchvision.transforms.Compose([
    torchvision.transforms.ToTensor(),
    torchvision.transforms.Normalize([0.4914, 0.4822, 0.4465],
                                     [0.2023, 0.1994, 0.2010])])
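With `ratio=(1.0, 1.0)` the random crop is always square, so its side length follows directly from the sampled area fraction. A small arithmetic sketch (the helper name `crop_side` is made up for illustration, and it ignores the integer rounding a real crop would apply):

```python
import math

def crop_side(image_side, area_fraction):
    """Side length of a square crop covering area_fraction of a square image."""
    return image_side * math.sqrt(area_fraction)

# Bounds of scale=(0.64, 1.0) applied to the 40x40 upscaled image
for fraction in (0.64, 1.0):
    print(fraction, crop_side(40, fraction))
```

So each training crop is roughly 32 to 40 pixels on a side before it is resized back to 32x32.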

train_ds, train_valid_ds = [torchvision.datasets.ImageFolder(
    os.path.join(data_dir, 'train_valid_test', folder),
    transform=transform_train) for folder in ['train', 'train_valid']]

valid_ds, test_ds = [torchvision.datasets.ImageFolder(
    os.path.join(data_dir, 'train_valid_test', folder),
    transform=transform_test) for folder in ['valid', 'test']]

train_iter, train_valid_iter = [torch.utils.data.DataLoader(
    dataset, batch_size, shuffle=True, drop_last=True,
    num_workers=8,  # roughly the number of CPU cores is a reasonable start
    pin_memory=True)
    for dataset in (train_ds, train_valid_ds)]

valid_iter = torch.utils.data.DataLoader(valid_ds, batch_size, shuffle=False,
                                         drop_last=True)

test_iter = torch.utils.data.DataLoader(test_ds, batch_size, shuffle=False,
                                        drop_last=False)

def get_net():
    net = torchvision.models.resnet18(pretrained=True)
    # net.fc = nn.Linear(net.fc.in_features, 10)
    net.fc = nn.Sequential(
        nn.Dropout(p=0.5),  # drop 50% of the activations; the rate is tunable
        nn.Linear(net.fc.in_features, 10)
    )
    # Freeze everything first, then unfreeze selected parts
    for param in net.parameters():
        param.requires_grad = False
    for param in net.layer4.parameters():  # unfreeze the last residual stage
        param.requires_grad = True
    for param in net.layer3.parameters():  # unfreeze the second-to-last stage
        param.requires_grad = True
    for param in net.fc.parameters():  # fc must be unfrozen separately
        param.requires_grad = True
    return net

def evaluate_accuracy_gpu(net, data_iter, device=None): #@save
    """Compute the accuracy of a model on a dataset using a GPU."""
    if isinstance(net, nn.Module):
        net.eval()  # switch to evaluation mode
        if not device:
            device = next(iter(net.parameters())).device
    # Number of correct predictions, total number of predictions
    metric = d2l.Accumulator(2)
    with torch.no_grad():
        for X, y in data_iter:
            if isinstance(X, list):
                # Needed for BERT fine-tuning (covered later)
                X = [x.to(device) for x in X]
            else:
                X = X.to(device)
            y = y.to(device)
            metric.add(d2l.accuracy(net(X), y), y.numel())
    return metric[0] / metric[1]

def train(net, train_iter, valid_iter, num_epochs, lr, wd, devices, lr_period,
          lr_decay):
    loss = nn.CrossEntropyLoss(reduction="none")
    # Per-group learning rates: the new fc head uses 10x the backbone rate
    fc_param_names = {name for name, _ in net.named_parameters() if "fc" in name}
    backbone_params = [
        p for name, p in net.named_parameters()
        if p.requires_grad and name not in fc_param_names  # filter by name
    ]
    fc_params = [
        p for name, p in net.named_parameters()
        if p.requires_grad and name in fc_param_names
    ]
    trainer = torch.optim.SGD([
        {"params": backbone_params, "lr": lr},  # backbone
        {"params": fc_params, "lr": lr * 10}  # fully connected head
    ], momentum=0.9, weight_decay=wd)
    # params_1x = [param for name, param in net.named_parameters()
    #              if name not in ["fc.weight", "fc.bias"]]
    # trainer = torch.optim.SGD([{'params': params_1x},
    #                            {'params': net.fc.parameters(),
    #                             'lr': lr * 10}],
    #                           lr=lr, momentum=0.9, weight_decay=wd)
    scheduler = torch.optim.lr_scheduler.StepLR(trainer, lr_period, lr_decay)
    num_batches, timer = len(train_iter), d2l.Timer()
    # Avoid a modulo-by-zero when there are fewer than 5 batches per epoch
    log_interval = max(1, num_batches // 5)
    legend = ['train loss', 'train acc']
    if valid_iter is not None:
        legend.append('valid acc')
    animator = d2l.Animator(xlabel='epoch', xlim=[1, num_epochs],
                            legend=legend, ylim=[0, 1])
    net = nn.DataParallel(net, device_ids=devices).to(devices[0])
    print(devices[0])
    for epoch in range(num_epochs):
        net.train()
        # Sum of training loss, sum of correct predictions, number of examples
        metric = d2l.Accumulator(3)
        for i, (features, labels) in enumerate(train_iter):
            timer.start()
            l, acc = d2l.train_batch_ch13(net, features, labels,
                                          loss, trainer, devices)
            metric.add(l, acc, labels.shape[0])
            timer.stop()
            if (i + 1) % log_interval == 0 or i == num_batches - 1:
                # animator.add(epoch + (i + 1) / num_batches,
                #              (metric[0] / metric[2], metric[1] / metric[2], None))
                print(f'epoch: {epoch} train loss {metric[0] / metric[2]:.3f}, '
                      f'train acc {metric[1] / metric[2]:.3f}')
        if valid_iter is not None:
            valid_acc = d2l.evaluate_accuracy_gpu(net, valid_iter)
            # animator.add(epoch + 1, (None, None, valid_acc))
            print(f'epoch: {epoch} valid acc {valid_acc}')
        scheduler.step()
    measures = (f'train loss {metric[0] / metric[2]:.3f}, '
                f'train acc {metric[1] / metric[2]:.3f}')
    if valid_iter is not None:
        measures += f', valid acc {valid_acc:.3f}'
    print(measures + f'\n{metric[2] * num_epochs / timer.sum():.1f}'
          f' examples/sec on {str(devices)}')

devices, num_epochs, lr, wd = d2l.try_all_gpus(), 500, 5e-5, 9e-3
lr_period, lr_decay, net = 5, 0.85, get_net()
train(net, train_iter, valid_iter, num_epochs, lr, wd, devices, lr_period,
      lr_decay)
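With `lr_period = 5` and `lr_decay = 0.85`, `StepLR` multiplies every learning rate by 0.85 once every 5 epochs, so over 500 epochs the rate shrinks by a factor of 0.85^100. The sketch below reproduces that closed-form schedule in plain Python as a sanity check; `step_lr` is a hypothetical helper, not part of PyTorch.

```python
def step_lr(base_lr, epoch, period, decay):
    """Learning rate after `epoch` scheduler steps of a step-decay schedule."""
    return base_lr * decay ** (epoch // period)

for epoch in (0, 50, 100, 500):
    backbone = step_lr(5e-5, epoch, period=5, decay=0.85)  # lr
    head = step_lr(5e-4, epoch, period=5, decay=0.85)      # lr * 10 for fc
    print(f'epoch {epoch}: backbone lr {backbone:.2e}, fc lr {head:.2e}')
```

Under these settings the backbone rate falls below 1e-5 by epoch 50 and below 2e-6 by epoch 100. That may be one reason the validation accuracy stops moving, though this is a guess and was not verified in the run above.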

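preds = []
net.eval()  # disable dropout and use the BatchNorm running statistics
with torch.no_grad():  # no gradients are needed for prediction
    for X, _ in test_iter:
        y_hat = net(X.to(devices[0]))
        preds.extend(y_hat.argmax(dim=1).type(torch.int32).cpu().numpy())
# The test files are read in string-sorted order, so sort the ids the same way
sorted_ids = list(range(1, len(test_ds) + 1))
sorted_ids.sort(key=lambda x: str(x))
df = pd.DataFrame({'id': sorted_ids, 'label': preds})
df['label'] = df['label'].apply(lambda x: train_valid_ds.classes[x])
df.to_csv('submission.csv', index=False)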
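The ids are sorted as strings because, as far as I understand, `ImageFolder` lists files in lexicographic order, so the predictions come out in the order 1, 10, 100, ... rather than 1, 2, 3. A tiny illustration on twelve ids:

```python
ids = list(range(1, 13))
ids.sort(key=str)  # compare '1', '10', '11', ... as text, not as numbers
print(ids)
```

Sorting the ids numerically instead would pair each prediction with the wrong image id in `submission.csv`.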

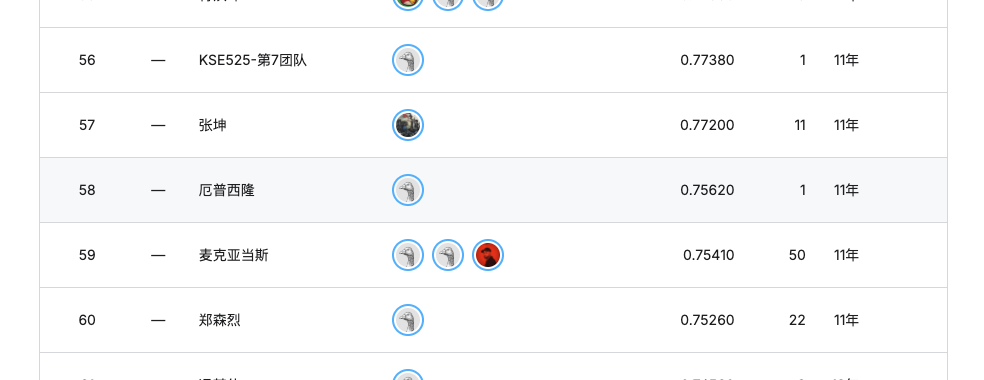

Ranking